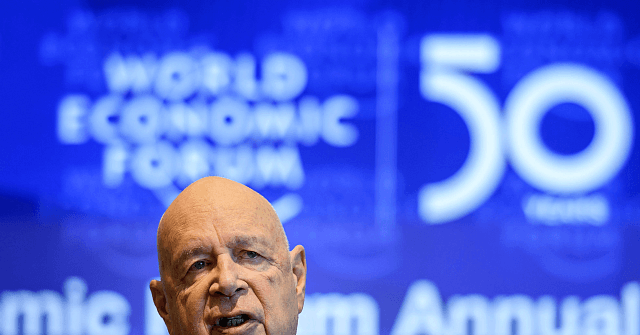

'Near-Perfect Detection:' World Economic Forum Pushes AI Censorship of Online Speech

The World Economic Forum (WEF), notorious for its “great reset” agenda, featuring the now-infamous slogan “you will own nothing and be happy,” has published an article pushing for artificial intelligence-powered censorship to contain the problem of “online abuse.”

The article, published on the WEF’s website, bundles together the real problems faced by online content moderators, such as detecting and removing child sexual abuse material (CSAM), with establishment preoccupations like containing “misinformation” and “white supremacy,” increasingly flexible labels that tech elites use to censor the enemies of progressivism.

Joe Biden arrives on stage to address the assembly on the second day of the World Economic Forum, on January 18, 2017 in Davos. (Photo by FABRICE COFFRINI/AFP via Getty Images)

Via the WEF:

“Since the introduction of the internet, wars have been fought, recessions have come and gone and new viruses have wreaked havoc. While the internet played a vital role in how these events were perceived, other changes – like the radicalization of extreme opinions, the spread of misinformation and the wide reach of child sexual abuse material (CSAM) – have been enabled by it.”

The article goes on to recommend the increased adoption of a technique already used by Silicon Valley leftists: using feedback from content moderators (who are typically either leftist or following leftist guidelines from social media companies) to train AI censorship models.

“To overcome the barriers of traditional detection methodologies, we propose a new framework: rather than relying on AI to detect at scale and humans to review edge cases, an intelligence-based approach is crucial. By bringing human-curated, multi-language, off-platform intelligence into learning sets, AI will then be able to detect nuanced, novel online abuses at scale, before they reach mainstream platforms. Supplementing this smarter automated detection with human expertise to review edge cases and identify false positives and negatives and then feeding those findings back into training sets will allow us to create AI with human intelligence baked in. This more intelligent AI gets more sophisticated with each moderation decision, eventually allowing near-perfect detection, at scale.”

Leftists in tech are increasingly fixated on owning and imprinting their biases on the field of artificial intelligence.

The field of “machine learning fairness,” which blends critical race theory with computer science, is one such example. A devotee of the field, former Google employee Meredith Whittaker, is now an adviser at Joe Biden’s FTC.

Allum Bokhari is the senior technology correspondent at Breitbart News. He is the author of #DELETED: Big Tech’s Battle to Erase the Trump Movement and Steal The Election.

References:

- https://www.weforum.org/great-reset/

- https://www.weforum.org/agenda/2022/08/online-abuse-artificial-intelligence-human-input

- https://www.breitbart.com/tech/2020/10/03/bokhari-critical-race-theorists-control-the-big-tech-algorithms-that-control-us/

- https://www.breitbart.com/tech/2021/11/02/ftc-to-hire-leftist-wacko-meredith-whitaker-former-google-worker-who-attacked-women-for-supporting-trump/

- https://www.amazon.com/DELETED-Techs-Battle-Movement-Election/dp/154605930X